ToTensor in PyTorch

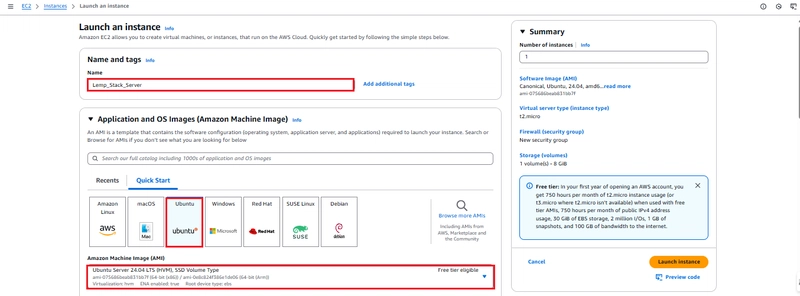


*Memos:

- My post explains how to convert and scale a PIL Image to an Image in PyTorch.

- My post explains Compose().

- My post explains ToImage().

- My post explains ToDtype() about scale=True.

- My post explains PILToTensor().

- My post explains ToPILImage().

- My post explains OxfordIIITPet().

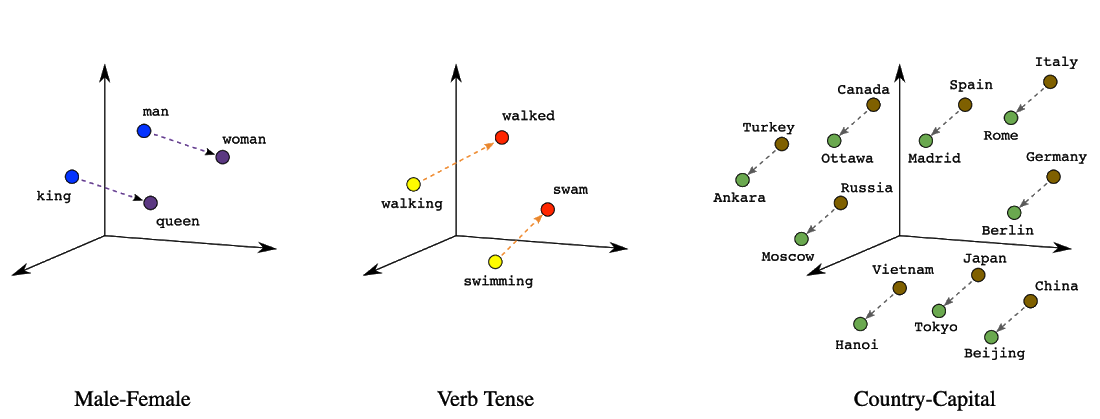

ToTensor() can convert a PIL (Pillow library) Image ([H, W, C]) or ndarray to a tensor ([C, H, W]) and scale the values of a PIL Image or ndarray to [0.0, 1.0], while an Image ([..., C, H, W]) or tensor is returned as is, as shown below:

*Memos:

- ToTensor() is deprecated, so instead use Compose(transforms=[ToImage(), ToDtype(dtype=torch.float32, scale=True)]) according to the doc.

- A PIL Image is scaled to [0.0, 1.0] if the PIL Image belongs to one of the modes (L, LA, P, I, F, RGB, YCbCr, RGBA, CMYK, 1).

- An ndarray is scaled to [0.0, 1.0] if the ndarray is uint8, which is [0, 255].

- The 1st argument is img (Required-Type: PIL Image, Image or tensor/ndarray (int/float/complex/bool)): *Memos:

  - A tensor can be 0D or more D.

  - An ndarray must be 2D or 3D.

  - Don't use img=.

- v2 is recommended to use according to V1 or V2? Which one should I use?.

from torchvision.datasets import OxfordIIITPet

from torchvision.transforms.v2 import ToImage, ToTensor

import torch

import numpy as np

ToTensor()

# ToTensor()

PILImage_data = OxfordIIITPet(

root="data",

transform=None

)

Image_data = OxfordIIITPet(

root="data",

transform=ToImage()

)

Tensor_data = OxfordIIITPet(

root="data",

transform=ToTensor()

)

Tensor_data

# Dataset OxfordIIITPet

# Number of datapoints: 3680

# Root location: data

# StandardTransform

# Transform: ToTensor()

Tensor_data[0]

# (tensor([[[0.1451, 0.1373, 0.1412, ..., 0.9686, 0.9765, 0.9765],

# [0.1373, 0.1373, 0.1451, ..., 0.9647, 0.9725, 0.9765],

# ...,

# [0.1098, 0.1098, 0.1059, ..., 0.2314, 0.2549, 0.2980]],

# [[0.0784, 0.0706, 0.0745, ..., 0.9725, 0.9725, 0.9725],

# [0.0706, 0.0706, 0.0784, ..., 0.9686, 0.9686, 0.9725],

# ...,

# [0.1059, 0.1059, 0.1059, ..., 0.3686, 0.4157, 0.4588]],

# [[0.0471, 0.0392, 0.0431, ..., 0.9922, 0.9922, 0.9922],

# [0.0392, 0.0392, 0.0471, ..., 0.9843, 0.9882, 0.9922],

# ...,

# [0.1373, 0.1373, 0.1373, ..., 0.8392, 0.9098, 0.8745]]]), 0)

Tensor_data[0][0].size()

# torch.Size([3, 500, 394])

Tensor_data[0][0]

# tensor([[[0.1451, 0.1373, 0.1412, ..., 0.9686, 0.9765, 0.9765],

# [0.1373, 0.1373, 0.1451, ..., 0.9647, 0.9725, 0.9765],

# ...,

# [0.1098, 0.1098, 0.1059, ..., 0.2314, 0.2549, 0.2980]],

# [[0.0784, 0.0706, 0.0745, ..., 0.9725, 0.9725, 0.9725],

# [0.0706, 0.0706, 0.0784, ..., 0.9686, 0.9686, 0.9725],

# ...,

# [0.1059, 0.1059, 0.1059, ..., 0.3686, 0.4157, 0.4588]],

# [[0.0471, 0.0392, 0.0431, ..., 0.9922, 0.9922, 0.9922],

# [0.0392, 0.0392, 0.0471, ..., 0.9843, 0.9882, 0.9922],

# ...,

# [0.1373, 0.1373, 0.1373, ..., 0.8392, 0.9098, 0.8745]]])

Tensor_data[0][1]

# 0

import matplotlib.pyplot as plt

plt.imshow(X=Tensor_data[0][0])

# TypeError: Invalid shape (3, 500, 394) for image data

tt = ToTensor()

tt(PILImage_data) # The dataset is returned unchanged; its items are still PIL Images.

# Dataset OxfordIIITPet

# Number of datapoints: 3680

# Root location: data

tt(PILImage_data[0])

# (tensor([[[0.1451, 0.1373, 0.1412, ..., 0.9686, 0.9765, 0.9765],

# [0.1373, 0.1373, 0.1451, ..., 0.9647, 0.9725, 0.9765],

# ...,

# [0.1098, 0.1098, 0.1059, ..., 0.2314, 0.2549, 0.2980]],

# [[0.0784, 0.0706, 0.0745, ..., 0.9725, 0.9725, 0.9725],

# [0.0706, 0.0706, 0.0784, ..., 0.9686, 0.9686, 0.9725],

# ...,

# [0.1059, 0.1059, 0.1059, ..., 0.3686, 0.4157, 0.4588]],

# [[0.0471, 0.0392, 0.0431, ..., 0.9922, 0.9922, 0.9922],

# [0.0392, 0.0392, 0.0471, ..., 0.9843, 0.9882, 0.9922],

# ...,

# [0.1373, 0.1373, 0.1373, ..., 0.8392, 0.9098, 0.8745]]]), 0)

tt(PILImage_data[0][0])

# tensor([[[0.1451, 0.1373, 0.1412, ..., 0.9686, 0.9765, 0.9765],

# [0.1373, 0.1373, 0.1451, ..., 0.9647, 0.9725, 0.9765],

# ...,

# [0.1098, 0.1098, 0.1059, ..., 0.2314, 0.2549, 0.2980]],

# [[0.0784, 0.0706, 0.0745, ..., 0.9725, 0.9725, 0.9725],

# [0.0706, 0.0706, 0.0784, ..., 0.9686, 0.9686, 0.9725],

# ...,

# [0.1059, 0.1059, 0.1059, ..., 0.3686, 0.4157, 0.4588]],

# [[0.0471, 0.0392, 0.0431, ..., 0.9922, 0.9922, 0.9922],

# [0.0392, 0.0392, 0.0471, ..., 0.9843, 0.9882, 0.9922],

# ...,

# [0.1373, 0.1373, 0.1373, ..., 0.8392, 0.9098, 0.8745]]])

tt(Image_data) # The dataset is returned unchanged; its transform is still `ToImage()`.

# Dataset OxfordIIITPet

# Number of datapoints: 3680

# Root location: data

# StandardTransform

# Transform: ToImage()

tt(Image_data[0])

# (Image([[[37, 35, 36, ..., 247, 249, 249],

# [35, 35, 37, ..., 246, 248, 249],

# ...,

# [28, 28, 27, ..., 59, 65, 76]],

# [[20, 18, 19, ..., 248, 248, 248],

# [18, 18, 20, ..., 247, 247, 248],

# ...,

# [27, 27, 27, ..., 94, 106, 117]],

# [[12, 10, 11, ..., 253, 253, 253],

# [10, 10, 12, ..., 251, 252, 253],

# ...,

# [35, 35, 35, ..., 214, 232, 223]]], dtype=torch.uint8,), 0)

tt(Image_data[0][0])

# Image([[[37, 35, 36, ..., 247, 249, 249],

# [35, 35, 37, ..., 246, 248, 249],

# ...,

# [28, 28, 27, ..., 59, 65, 76]],

# [[20, 18, 19, ..., 248, 248, 248],

# [18, 18, 20, ..., 247, 247, 248],

# ...,

# [27, 27, 27, ..., 94, 106, 117]],

# [[12, 10, 11, ..., 253, 253, 253],

# [10, 10, 12, ..., 251, 252, 253],

# ...,

# [35, 35, 35, ..., 214, 232, 223]]], dtype=torch.uint8,)

plt.imshow(X=tt(PILImage_data[0][0]))

plt.imshow(X=tt(Image_data[0][0]))

# TypeError: Invalid shape (3, 500, 394) for image data

tt((torch.tensor(3), 0)) # int64

tt((torch.tensor(3, dtype=torch.int64), 0))

# (tensor(3), 0)

tt(torch.tensor(3))

# tensor(3)

tt((torch.tensor([0, 1, 2, 3]), 0))

# (tensor([0, 1, 2, 3]), 0)

tt(torch.tensor([0, 1, 2, 3]))

# tensor([0, 1, 2, 3])

tt((torch.tensor([[0, 1, 2, 3]]), 0))

# (tensor([[0, 1, 2, 3]]), 0)

tt(torch.tensor([[0, 1, 2, 3]]))

# tensor([[0, 1, 2, 3]])

tt((torch.tensor([[[0, 1, 2, 3]]]), 0))

# (tensor([[[0, 1, 2, 3]]]), 0)

tt(torch.tensor([[[0, 1, 2, 3]]]))

# tensor([[[0, 1, 2, 3]]])

tt((torch.tensor([[[[0, 1, 2, 3]]]]), 0))

# (tensor([[[[0, 1, 2, 3]]]]), 0)

tt(torch.tensor([[[[0, 1, 2, 3]]]]))

# tensor([[[[0, 1, 2, 3]]]])

tt((torch.tensor([[[[[0, 1, 2, 3]]]]]), 0))

# (tensor([[[[[0, 1, 2, 3]]]]]), 0)

tt(torch.tensor([[[[[0, 1, 2, 3]]]]]))

# tensor([[[[[0, 1, 2, 3]]]]])

tt((torch.tensor([[0, 1, 2, 3]], dtype=torch.int32), 0))

# (tensor([[0, 1, 2, 3]], dtype=torch.int32), 0)

tt((torch.tensor([[0, 1, 2, 3]], dtype=torch.uint8), 0))

# (tensor([[0, 1, 2, 3]], dtype=torch.uint8), 0)

tt((torch.tensor([[0., 1., 2., 3.]]), 0)) # float32

tt((torch.tensor([[0., 1., 2., 3.]], dtype=torch.float32), 0))

# (tensor([[0., 1., 2., 3.]]), 0)

tt((torch.tensor([[0., 1., 2., 3.]], dtype=torch.float64), 0))

# (tensor([[0., 1., 2., 3.]], dtype=torch.float64), 0)

tt((torch.tensor([[0.+0.j, 1.+0.j, 2.+0.j, 3.+0.j]]), 0)) # complex64

tt((torch.tensor([[0.+0.j, 1.+0.j, 2.+0.j, 3.+0.j]], dtype=torch.complex64), 0))

# (tensor([[0.+0.j, 1.+0.j, 2.+0.j, 3.+0.j]]), 0)

tt((torch.tensor([[0.+0.j, 1.+0.j, 2.+0.j, 3.+0.j]], dtype=torch.complex32), 0))

# (tensor([[0.+0.j, 1.+0.j, 2.+0.j, 3.+0.j]], dtype=torch.complex32), 0)

tt((torch.tensor([[True, False, True, False]]), 0)) # bool

tt((torch.tensor([[True, False, True, False]], dtype=torch.bool), 0))

# (tensor([[True, False, True, False]]), 0)

tt((np.array([[0, 1, 2, 3]]), 0)) # int32 (NumPy's default integer on this platform; int64 on most Linux/macOS builds)

tt((np.array([[0, 1, 2, 3]], dtype=np.int32), 0))

# (tensor([[[0, 1, 2, 3]]], dtype=torch.int32), 0)

tt((np.array([[[0, 1, 2, 3]]]), 0)) # int32

# (tensor([[[0]], [[1]], [[2]], [[3]]], dtype=torch.int32), 0)

tt((np.array([[0, 1, 2, 3]], dtype=np.int64), 0))

# (tensor([[[0, 1, 2, 3]]]), 0)

tt((np.array([[0, 1, 2, 3]], dtype=np.uint8), 0))

# (tensor([[[0.0000, 0.0039, 0.0078, 0.0118]]]), 0)

tt((np.array([[0, 1, 2, 3]], dtype=np.uint16), 0))

# (tensor([[[0, 1, 2, 3]]], dtype=torch.uint16), 0)

tt((np.array([[0., 1., 2., 3.]]), 0)) # float64

tt((np.array([[0., 1., 2., 3.]], dtype=np.float64), 0))

# (tensor([[[0., 1., 2., 3.]]], dtype=torch.float64), 0)

tt((np.array([[0., 1., 2., 3.]], dtype=np.float32), 0))

# (tensor([[[0., 1., 2., 3.]]]), 0)

tt((np.array([[0.+0.j, 1.+0.j, 2.+0.j, 3.+0.j]]), 0)) # complex128

tt((np.array([[0.+0.j, 1.+0.j, 2.+0.j, 3.+0.j]], dtype=np.complex128), 0))

# (tensor([[[0.+0.j, 1.+0.j, 2.+0.j, 3.+0.j]]], dtype=torch.complex128), 0)

tt((np.array([[0.+0.j, 1.+0.j, 2.+0.j, 3.+0.j]], dtype=np.complex64), 0))

# (tensor([[[0.+0.j, 1.+0.j, 2.+0.j, 3.+0.j]]]), 0)

tt((np.array([[True, False, True, False]]), 0)) # bool

tt((np.array([[True, False, True, False]], dtype=bool), 0))

# (tensor([[[ True, False, True, False]]]), 0)
