ViSMaP: Unsupervised Summarization of Hour-Long Videos Using Meta-Prompting and Short-Form Datasets

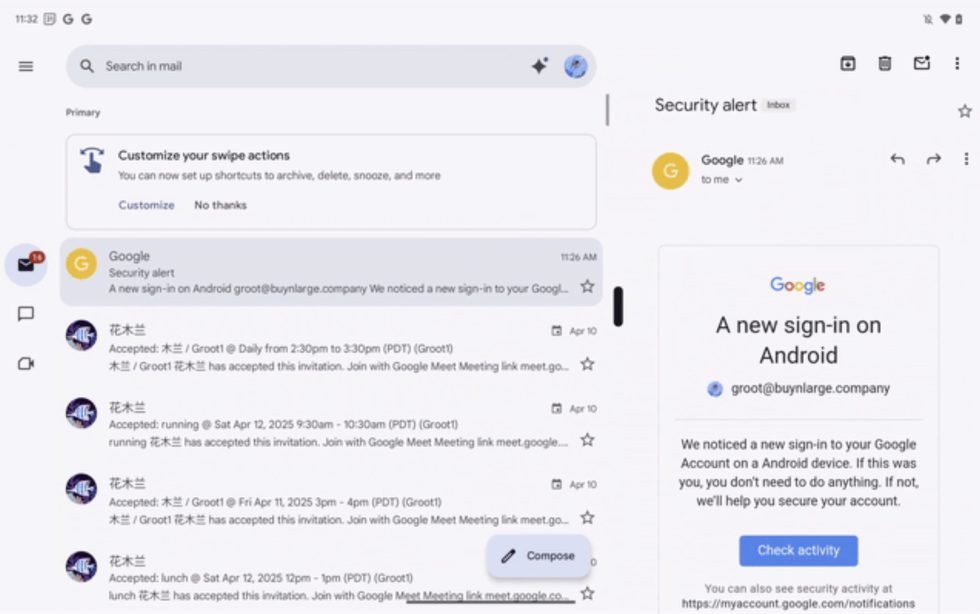

This post first appeared on MarkTechPost.

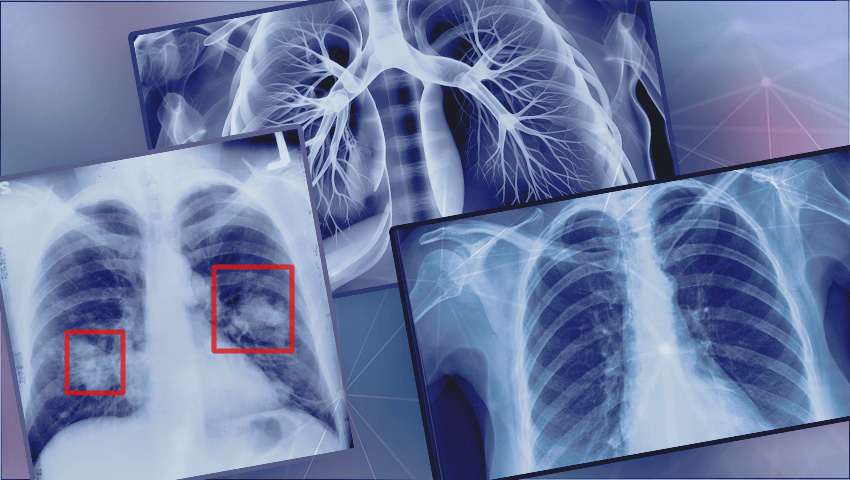

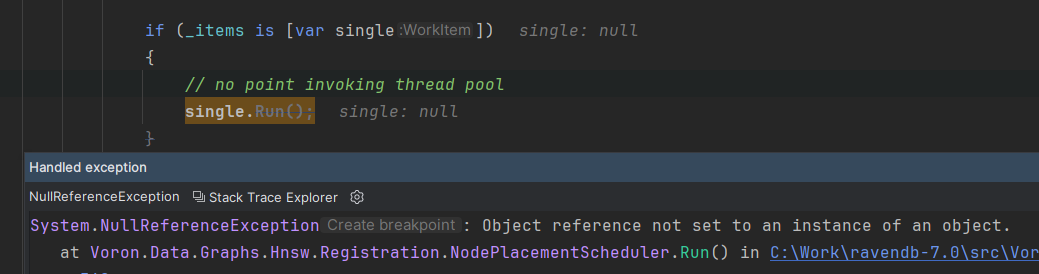

Video captioning models are typically trained on datasets consisting of short videos, usually under three minutes in length, paired with corresponding captions. While this enables them to describe basic actions like walking or talking, these models struggle with the complexity of long-form videos, such as vlogs, sports events, and movies that can last over an hour. When applied to such videos, they often generate fragmented descriptions focused on isolated actions rather than capturing the broader storyline. Efforts like MA-LMM and LaViLa have extended video captioning to 10-minute clips using LLMs, but hour-long videos remain a challenge due to a shortage of suitable datasets. Although Ego4D introduced a large dataset of hour-long videos, its first-person perspective limits its broader applicability. Video ReCap addressed this gap by training on hour-long videos with multi-granularity annotations, yet this approach is expensive and prone to annotation inconsistencies. In contrast, annotated short-form video datasets are widely available and more user-friendly.

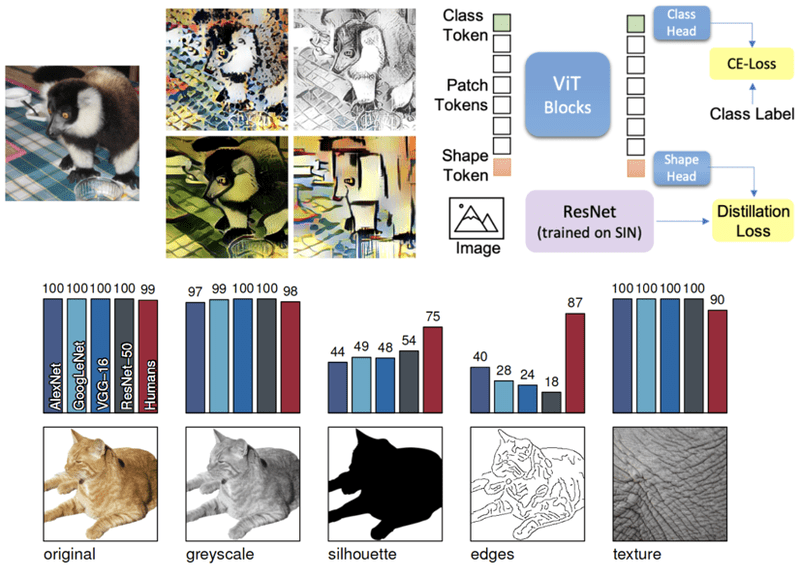

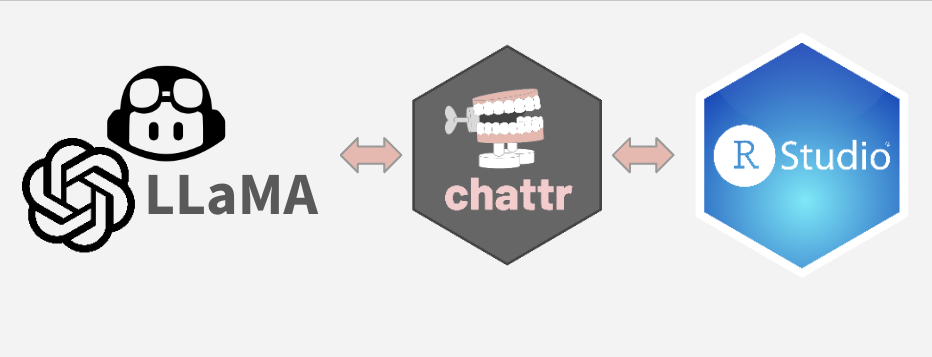

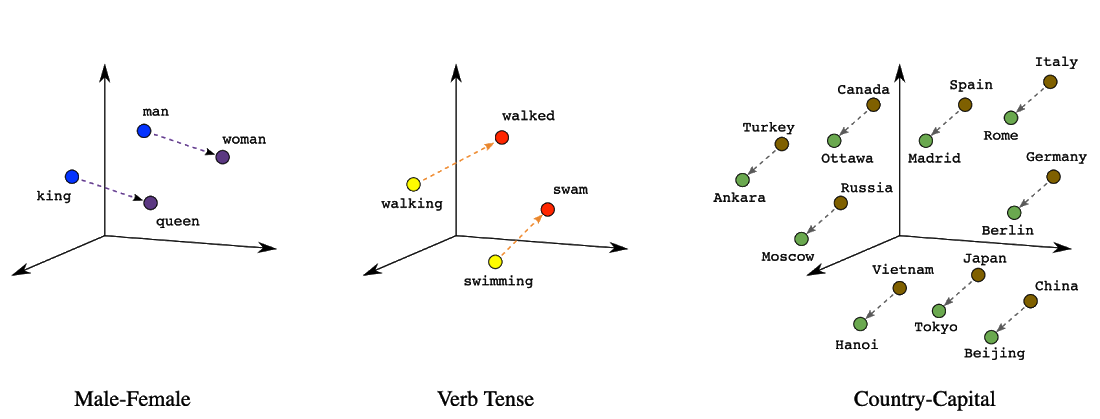

Advancements in visual-language models have significantly enhanced the integration of vision and language tasks, with early works such as CLIP and ALIGN laying the foundation. Subsequent models, such as LLaVA and MiniGPT-4, extended these capabilities to images, while others adapted them for video understanding by focusing on temporal sequence modeling and constructing more robust datasets. Despite these developments, the scarcity of large, annotated long-form video datasets remains a significant hindrance to progress. Traditional short-form video tasks, like video question answering, captioning, and grounding, primarily require spatial or temporal understanding, whereas summarizing hour-long videos demands identifying key frames amidst substantial redundancy. While some models, such as LongVA and LLaVA-Video, can perform VQA on long videos, they struggle with summarization tasks due to data limitations.

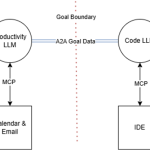

Researchers from Queen Mary University and Spotify introduce ViSMaP, an unsupervised method for summarizing hour-long videos without requiring costly annotations. Traditional models perform well on short, pre-segmented videos but struggle with longer content where important events are scattered. ViSMaP bridges this gap by using LLMs and a meta-prompting strategy to iteratively generate and refine pseudo-summaries from clip descriptions created by short-form video models. The process involves three LLMs working in sequence: one generates a summary, one evaluates it, and one optimizes the prompt for the next round. ViSMaP achieves performance comparable to fully supervised models across multiple datasets while maintaining domain adaptability and eliminating the need for extensive manual labeling.
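To make the generate, evaluate, and optimize cycle more concrete, here is a minimal sketch of such a loop. It is not the authors' implementation: the function names, the stopping rule (`max_rounds`, `target_score`), and the toy stand-ins for the three LLMs are all assumptions made for illustration. In practice each callable would wrap a call to a language model.

```python
from typing import Callable, List, Tuple

def meta_prompt_loop(
    clip_captions: List[str],
    generate: Callable[[str, List[str]], str],   # hypothetical generator LLM
    evaluate: Callable[[str, List[str]], float], # hypothetical evaluator LLM
    optimize: Callable[[str, str, float], str],  # hypothetical prompt optimizer LLM
    initial_prompt: str,
    max_rounds: int = 5,        # assumed stopping rule, not from the article
    target_score: float = 0.9,  # assumed stopping rule, not from the article
) -> Tuple[str, float]:
    """Iteratively refine a pseudo-summary; return the best one seen."""
    prompt = initial_prompt
    best_summary, best_score = "", float("-inf")
    for _ in range(max_rounds):
        summary = generate(prompt, clip_captions)
        score = evaluate(summary, clip_captions)
        if score > best_score:
            best_summary, best_score = summary, score
        if score >= target_score:
            break
        # Ask the optimizer for a better prompt based on the last attempt.
        prompt = optimize(prompt, summary, score)
    return best_summary, best_score

# Toy stand-ins so the loop can run without any model:
# each "+" in the prompt makes the generator cover one more clip caption.
captions = ["C opens the fridge.", "C chops onions.", "C cooks dinner."]
toy_generate = lambda prompt, caps: " ".join(caps[: prompt.count("+") + 1])
toy_evaluate = lambda summary, caps: sum(c in summary for c in caps) / len(caps)
toy_optimize = lambda prompt, summary, score: prompt + "+"

summary, score = meta_prompt_loop(
    captions, toy_generate, toy_evaluate, toy_optimize, "summarize"
)
print(summary, score)
```

Keeping the best-scoring summary rather than the last one is a design choice in this sketch: it guards against a later prompt revision making the output worse.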

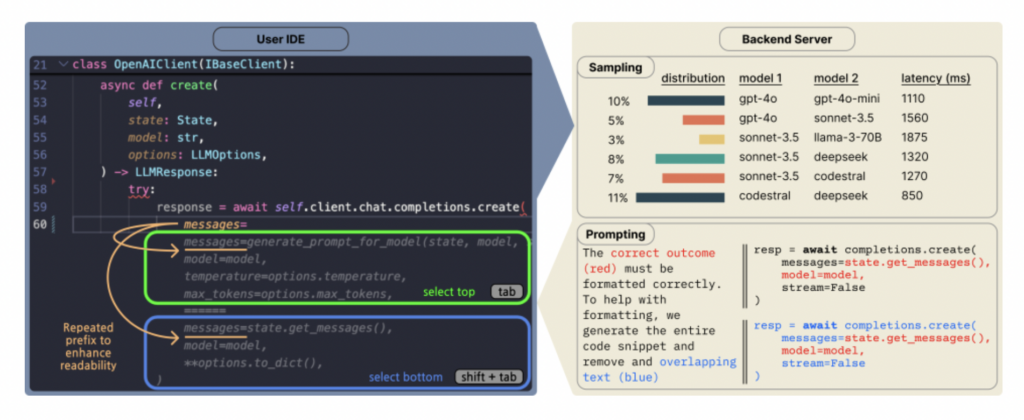

The study addresses cross-domain video summarization by training on a labeled short-form video dataset and adapting to unlabeled, hour-long videos from a different domain. Initially, a model is trained to summarize 3-minute videos using TimeSformer features, a visual-language alignment module, and a text decoder, optimized with cross-entropy and contrastive losses. To handle longer videos, each hour-long video is segmented into 3-minute clips, and this model generates a pseudo-caption for each clip. An iterative meta-prompting approach with multiple LLMs (generator, evaluator, optimizer) then refines these pseudo-captions into summaries. Finally, the model is fine-tuned on the resulting pseudo-summaries using a symmetric cross-entropy loss to manage noisy labels and improve adaptation.
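The symmetric cross-entropy (SCE) loss used in the final step adds a reverse cross-entropy term to the standard one, which makes training less sensitive to wrong labels. The sketch below follows the common formulation from the noisy-label literature for a single token with a one-hot pseudo-label. The weights `alpha` and `beta` and the clipping constant `log_zero` are illustrative assumptions, not values reported for ViSMaP.

```python
import math
from typing import Sequence

def symmetric_cross_entropy(
    probs: Sequence[float],  # predicted distribution over the vocabulary
    target: int,             # index of the (possibly noisy) pseudo-label
    alpha: float = 1.0,      # weight of standard cross-entropy (assumed)
    beta: float = 1.0,       # weight of reverse cross-entropy (assumed)
    log_zero: float = -4.0,  # stand-in for log(0) on the one-hot label (assumed)
) -> float:
    eps = 1e-12
    # Standard CE: -log p(target); grows without bound for confident mistakes.
    ce = -math.log(max(probs[target], eps))
    # Reverse CE: -sum_k p_k * log q_k with q one-hot.
    # log q_target = log 1 = 0; log q_k = log 0 is clipped to log_zero otherwise,
    # so this term is bounded by -log_zero.
    rce = -sum(p * log_zero for k, p in enumerate(probs) if k != target)
    return alpha * ce + beta * rce

def sequence_sce(token_probs, targets, **kwargs) -> float:
    """Average the per-token SCE loss over a pseudo-summary."""
    losses = [symmetric_cross_entropy(p, t, **kwargs)
              for p, t in zip(token_probs, targets)]
    return sum(losses) / len(losses)

loss = symmetric_cross_entropy([0.7, 0.2, 0.1], target=0)
print(round(loss, 4))
```

Because the reverse term is bounded, a confidently "wrong" prediction on a noisy pseudo-label contributes a limited penalty from that term, which is the intuition behind using SCE when fine-tuning on pseudo-summaries.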

The study evaluates ViSMaP across three scenarios: summarization of long videos using Ego4D-HCap, cross-domain generalization on the MSRVTT, MSVD, and YouCook2 datasets, and adaptation to short videos using EgoSchema. ViSMaP, trained on hour-long videos, is compared against supervised and zero-shot methods, such as Video ReCap and LaViLa+GPT3.5, and delivers competitive or superior performance without supervision. Evaluations use CIDEr, ROUGE-L, and METEOR scores, along with QA accuracy. Ablation studies highlight the benefits of meta-prompting and of individual components such as contrastive learning and the SCE loss. Implementation details include the use of TimeSformer, DistilBERT, and GPT-2, with training conducted on an NVIDIA A100 GPU.
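Of the metrics listed above, ROUGE-L is the easiest to work through by hand: it scores a generated summary by the longest common subsequence (LCS) of words it shares with the reference. The following is a simplified sketch using whitespace tokenization and a `beta` of 1.0, both of which are assumptions. Standard evaluation toolkits apply their own tokenization and weighting, so their numbers may differ.

```python
from typing import List

def lcs_length(a: List[str], b: List[str]) -> int:
    """Length of the longest common subsequence, via row-by-row DP."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            if x == y:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]

def rouge_l(candidate: str, reference: str, beta: float = 1.0) -> float:
    """ROUGE-L F-score; beta weights recall relative to precision."""
    cand, ref = candidate.lower().split(), reference.lower().split()
    if not cand or not ref:
        return 0.0
    lcs = lcs_length(cand, ref)
    if lcs == 0:
        return 0.0
    precision = lcs / len(cand)
    recall = lcs / len(ref)
    return (1 + beta**2) * precision * recall / (recall + beta**2 * precision)

score = rouge_l("the cat lay on the mat", "the cat sat on the mat")
print(round(score, 4))  # LCS is "the cat on the mat" (5 of 6 words)
```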

In conclusion, ViSMaP is an unsupervised approach for summarizing long videos by utilizing annotated short-video datasets and a meta-prompting strategy. It first creates high-quality summaries through meta-prompting and then trains a summarization model, reducing the need for extensive annotations. Experimental results demonstrate that ViSMaP performs on par with fully supervised methods and adapts effectively across various video datasets. However, its reliance on pseudo labels from a source-domain model may impact performance under significant domain shifts. Additionally, ViSMaP currently relies solely on visual information. Future work could integrate multimodal data, introduce hierarchical summarization, and develop more generalizable meta-prompting techniques.

