AI stirs up trouble in the science peer review process

Scientific publishing is confronting an increasingly contentious question: what should be done about AI in peer review?

Ecologist Timothée Poisot recently received a review that was clearly generated by ChatGPT. The document had the following telltale string of words attached: “Here is a revised version of your review with improved clarity and structure.”

Poisot was incensed. “I submit a manuscript for review in the hope of getting comments from my peers,” he fumed in a blog post. “If this assumption is not met, the entire social contract of peer review is gone.”

Poisot’s experience is not an isolated incident. A recent study published in Nature found that up to 17% of reviews for AI conference papers in 2023-24 showed signs of substantial modification by language models.

In a separate Nature survey, nearly one in five researchers admitted to using AI to speed up and ease the peer review process.

We’ve also seen a few absurd cases of what happens when AI-generated content slips through the peer review process, which is designed to uphold the quality of research.

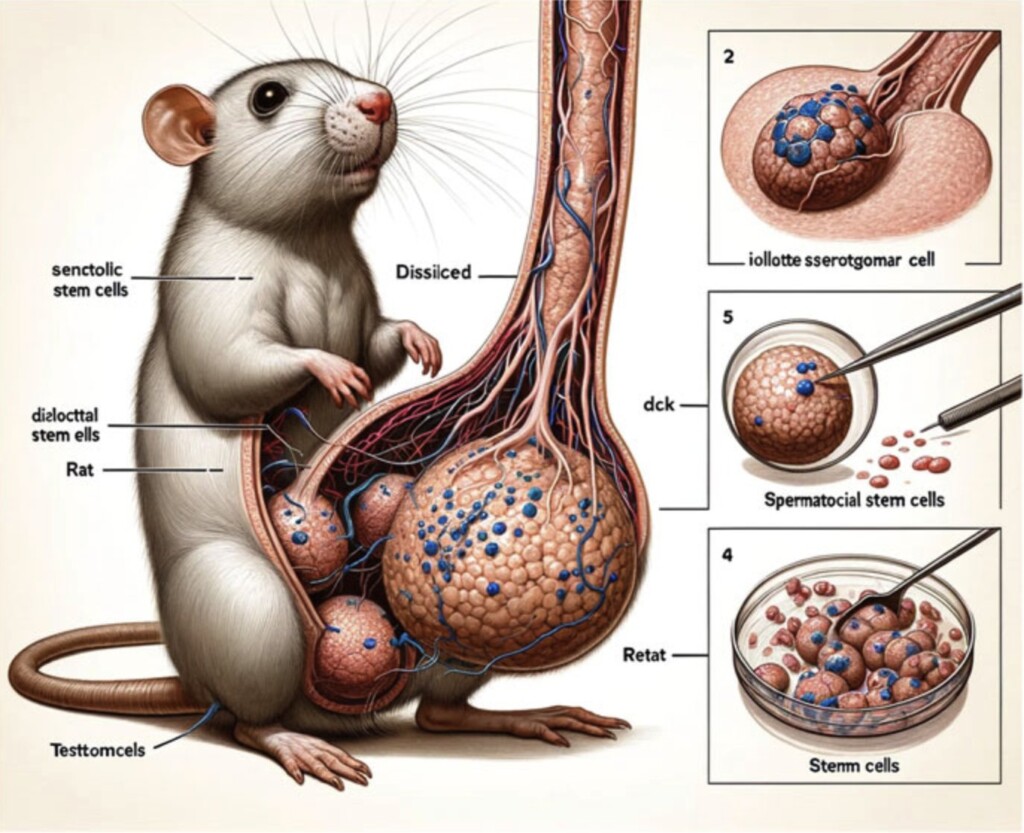

In 2024, a paper published in a Frontiers journal, which explored some highly complex cell signaling pathways, was found to contain bizarre, nonsensical diagrams generated by the AI art tool Midjourney.

One image depicted a deformed rat, while others were just random swirls and squiggles, filled with gibberish text.

Commenters on Twitter were aghast that such obviously flawed figures made it through peer review. “Erm, how did Figure 1 get past a peer reviewer?!” one asked.

In essence, there are two risks: a) peer reviewers using AI to review content, and b) AI-generated content slipping through the entire peer review process.

Publishers are responding to the issues. Elsevier has banned generative AI in peer review outright. Wiley and Springer Nature allow “limited use” with disclosure. A few, like the American Institute of Physics, are gingerly piloting AI tools to supplement – but not supplant – human feedback.

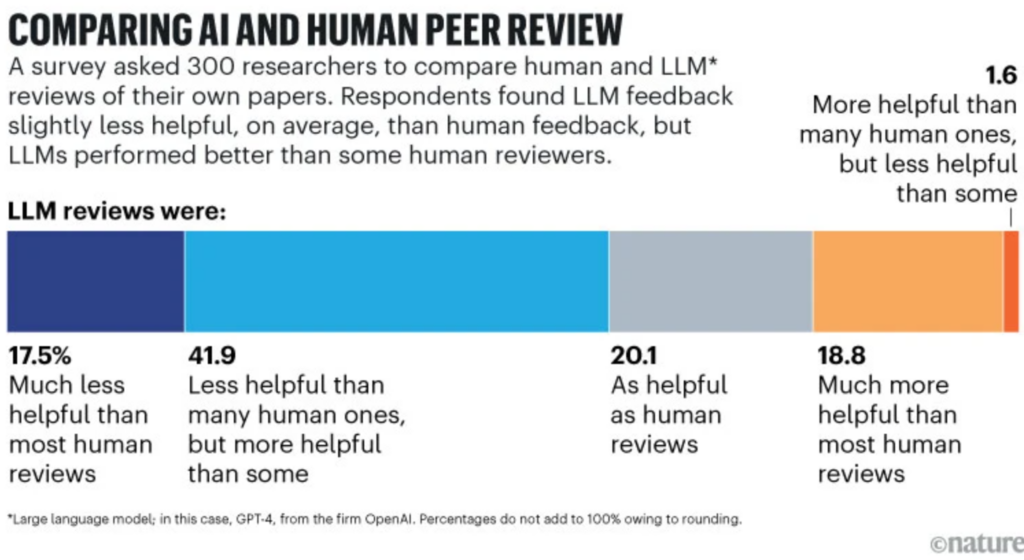

However, generative AI’s allure is strong, and some see benefits if it is applied judiciously. A Stanford study found that 40% of scientists felt ChatGPT reviews of their work could be as helpful as human ones, and 20% felt they could be more helpful.

Academia has revolved around human input for a millennium, though, so resistance is strong. “Not combating automated reviews means we have given up,” Poisot wrote.

The whole point of peer review, many argue, is considered feedback from fellow experts – not an algorithmic rubber stamp.

The post AI stirs up trouble in the science peer review process appeared first on DailyAI.
