Unleashing the Power of Agentic AI: How Autonomous Agents Are Transforming Cybersecurity and Application Security

Introduction

In the ever-changing landscape of cybersecurity, where threats grow more sophisticated every day, companies are turning to artificial intelligence (AI) to bolster their defenses. AI has long been part of cybersecurity, but it is now evolving into agentic AI, which offers proactive, adaptive, and context-aware security. This article examines the potential of agentic AI, with a focus on its use in application security (AppSec) and the emerging concept of AI-powered automatic vulnerability fixing.

The Rise of Agentic AI in Cybersecurity

Agentic AI refers to goal-oriented, autonomous systems that perceive their environment, make decisions, and take actions to reach their goals. Unlike traditional rule-based or reactive AI, agentic systems can learn, adapt, and operate with a degree of independence. In security, this autonomy translates into AI agents that continuously monitor networks, detect anomalies, and respond to attacks in real time without constant human intervention.

Agentic AI's potential in cybersecurity is vast. Using machine learning algorithms and large volumes of data, these agents can identify patterns and relationships that human analysts might miss. They can cut through the noise created by numerous security events, prioritize the most significant ones, and provide information that supports a rapid response. Agentic AI systems can also improve over time, refining their threat detection and adapting to attackers' ever-changing tactics.

Agentic AI and Application Security

While agentic AI has broad applications across cybersecurity, its impact on application security is especially noteworthy. As more organizations rely on complex, interconnected software systems, safeguarding these applications has become a top priority. Conventional security testing, such as periodic vulnerability scanning and manual code review, often cannot keep up with rapid development cycles.

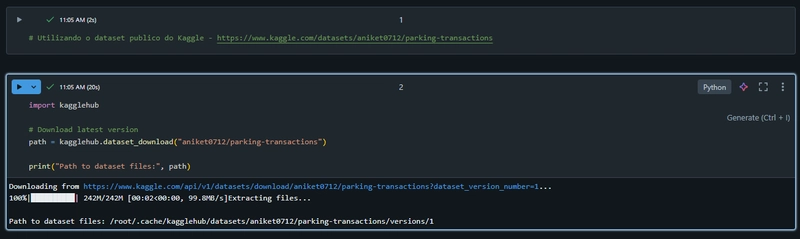

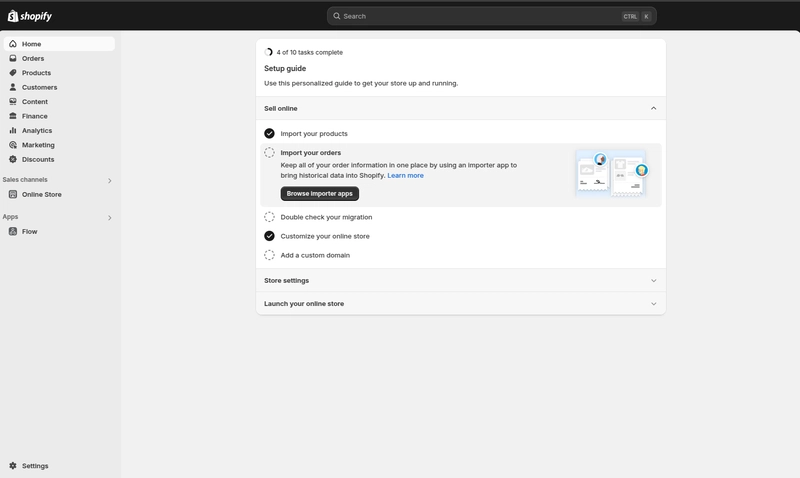

Agentic AI is the new frontier. By integrating intelligent agents into the software development lifecycle (SDLC), companies can shift their AppSec practices from reactive to proactive. These AI-powered agents can continuously monitor code repositories, analyzing each commit for potential vulnerabilities and security flaws. They can combine techniques such as static code analysis, dynamic testing, and machine learning to identify a wide range of issues, from common coding mistakes to subtle injection flaws.
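
To make the idea of analyzing each commit concrete, here is a minimal sketch in Python. It is not how any particular product works: the rule names, the regular expressions, and the `scan_diff` function are all hypothetical, and a real agent would rely on proper static analysis rather than pattern matching. The sketch only shows the general shape of the step: read a unified diff, look only at the lines the commit adds, and report anything that matches a rule.

```python
import re
from dataclasses import dataclass

# Hypothetical rule set for illustration only: each rule name maps to a
# regex that flags a risky-looking pattern on a single line of code.
RULES = {
    "sql-string-concat": re.compile(r"execute\(\s*[\"'].*[\"']\s*(\+|%)"),
    "hardcoded-secret": re.compile(
        r"(password|secret|api_key)\s*=\s*[\"'][^\"']+[\"']", re.IGNORECASE
    ),
    "shell-injection": re.compile(r"subprocess\.\w+\(.*shell\s*=\s*True"),
}


@dataclass
class Finding:
    rule: str
    file: str
    line: int
    text: str


def scan_diff(diff: str) -> list[Finding]:
    """Scan only the lines that a commit adds in a unified diff."""
    findings: list[Finding] = []
    current_file = ""
    new_line = 0  # line number in the post-commit version of the file
    for raw in diff.splitlines():
        if raw.startswith("+++ "):
            # File header, e.g. "+++ b/app/db.py"
            current_file = raw[4:].removeprefix("b/")
        elif raw.startswith("@@"):
            # Hunk header, e.g. "@@ -10,3 +10,5 @@"
            match = re.match(r"@@ -\d+(?:,\d+)? \+(\d+)", raw)
            new_line = int(match.group(1)) if match else 0
        elif raw.startswith("+"):
            text = raw[1:]
            for name, pattern in RULES.items():
                if pattern.search(text):
                    findings.append(
                        Finding(name, current_file, new_line, text.strip())
                    )
            new_line += 1
        elif raw.startswith(" "):
            # Unchanged context line: present in the new file, so count it.
            new_line += 1
        # Removed lines ("-") do not exist in the new file and are skipped.
    return findings
```

Calling `scan_diff` on the output of `git diff` for a commit would return a list of `Finding` objects that an agent could then pass to deeper analysis or triage.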

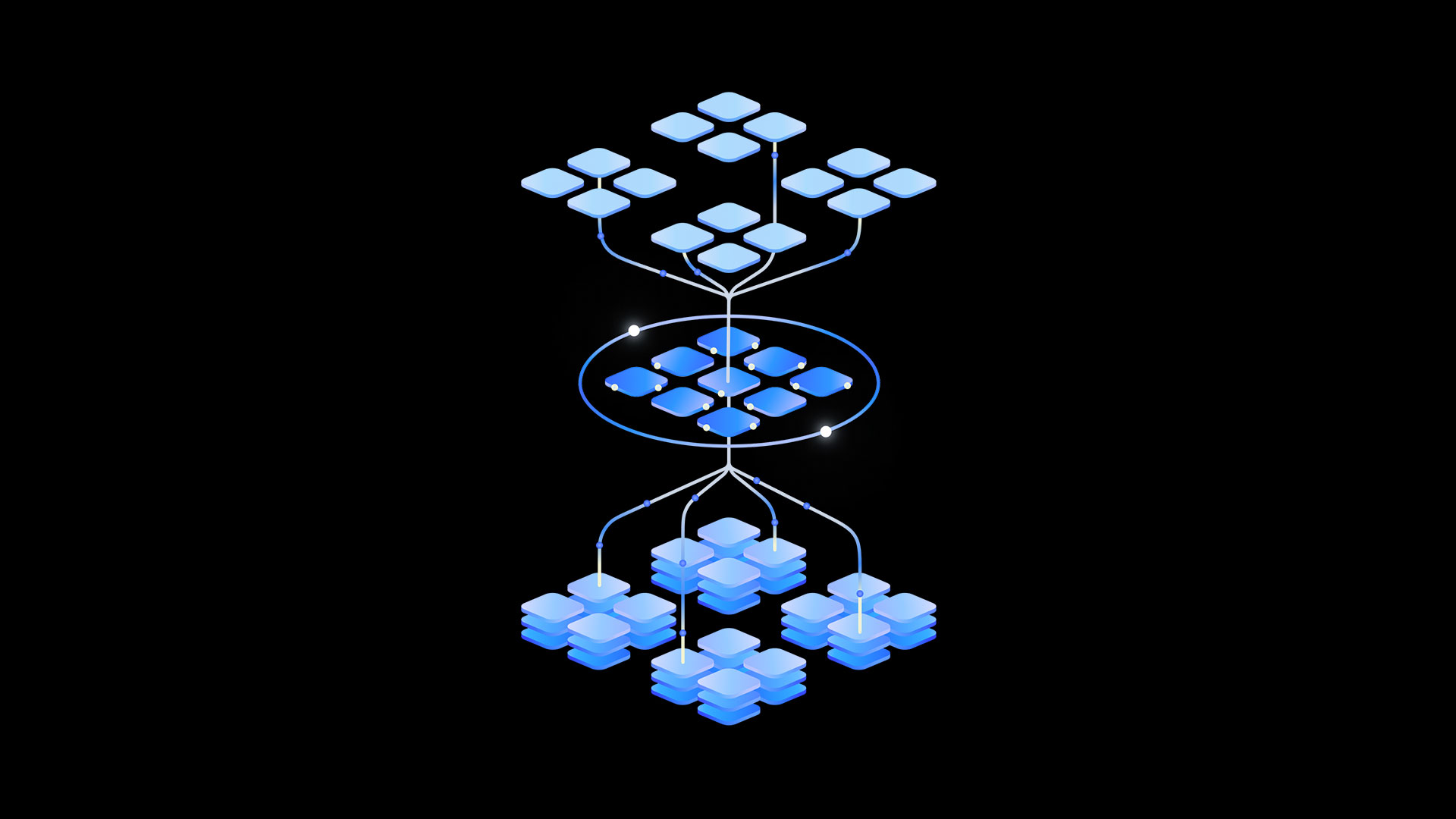

What sets agentic AI apart in AppSec is its ability to adapt to the specific context of each application. By building a comprehensive code property graph (CPG), a rich representation of the relationships among code elements, an agent can develop a deep understanding of an application's structure, data flows, and attack paths. This lets the AI rank vulnerabilities by their real-world severity and exploitability instead of relying solely on a generic severity rating.
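
The difference between generic and context-aware ranking can be illustrated with a toy example. The sketch below is a simplification under stated assumptions: a real CPG combines syntax, control-flow, and data-dependence information, whereas this stand-in models only data-flow edges in a plain dictionary. The node names, the `generic_severity` scores, and both functions are hypothetical. The point is that a finding whose sink is reachable from untrusted input outranks one with a higher generic score that an attacker cannot reach.

```python
from collections import deque

# Toy stand-in for a code property graph: nodes are code elements and
# edges are data-flow relationships. All names here are made up.
DATA_FLOW = {
    "http_param:name": ["var:name"],
    "var:name": ["call:build_query"],
    "call:build_query": ["sink:db.execute"],
    "const:report_dir": ["sink:os.system"],
}
UNTRUSTED_SOURCES = {"http_param:name"}


def reachable_from(graph, sources):
    """Breadth-first search over data-flow edges from the given sources."""
    seen = set(sources)
    queue = deque(sources)
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def rank_findings(findings, graph, sources):
    """Order findings: attacker-reachable sinks first, then generic severity."""
    tainted = reachable_from(graph, sources)
    return sorted(
        findings,
        key=lambda f: (f["sink"] in tainted, f["generic_severity"]),
        reverse=True,
    )


findings = [
    {"id": "F1", "sink": "sink:os.system", "generic_severity": 9.8},
    {"id": "F2", "sink": "sink:db.execute", "generic_severity": 8.1},
]
ranked = rank_findings(findings, DATA_FLOW, UNTRUSTED_SOURCES)
print([f["id"] for f in ranked])  # ['F2', 'F1']
```

Here F1 has the higher generic score, but its sink is fed only by a constant, so the reachable F2 is ranked first.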

AI-Powered Automatic Fixing

Automated vulnerability fixing is perhaps the most compelling application of agentic AI in AppSec. Traditionally, human developers have had to review code manually to find a vulnerability, understand the problem, and implement a fix. This process is time-consuming and error-prone, and it often delays the deployment of critical security patches.

Agentic AI changes the game. Drawing on the deep understanding of the codebase that the CPG provides, AI agents can not only identify vulnerabilities but also generate context-aware, non-breaking fixes automatically. An agent can analyze the code surrounding a flaw, understand its intended functionality, and craft a fix that resolves the security issue without introducing new bugs or breaking existing features.

AI-powered automatic fixing has profound implications. The time between discovering a vulnerability and resolving it can be significantly reduced, narrowing the window of opportunity for attackers. It lightens the load on development teams, allowing them to focus on building new features rather than spending time on security fixes. And by automating the fixing process, organizations can apply a consistent, reliable approach that reduces the risk of oversight and human error.

Challenges and Considerations

It is vital to acknowledge the risks and challenges that come with using AI agents in AppSec and cybersecurity. The question of accountability and trust is an essential one (see https://www.linkedin.com/posts/qwiet_qwiet-ai-webinar-series-ai-autofix-the-activity-7202016247830491136-ax4v). As AI agents become more autonomous and capable of making decisions on their own, organizations need to establish clear guidelines to ensure that the AI acts within acceptable boundaries. This includes implementing robust testing and validation processes to verify the correctness and safety of AI-generated fixes.
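
One way to picture such a validation process is as a gate that every AI-generated fix must pass before anyone considers merging it. The sketch below is an assumption about what a reasonable gate might check, not a description of any specific tool: `validate_fix`, `FixDecision`, and the `scanner` and `run_tests` callables are hypothetical placeholders for a real analyzer and test suite. The checks are that the original finding disappears, that no new findings appear, and that regression tests still pass.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class FixDecision:
    accepted: bool
    reason: str


def validate_fix(
    original: str,
    patched: str,
    scanner: Callable[[str], set[str]],  # hypothetical: code -> finding ids
    run_tests: Callable[[str], bool],    # hypothetical: code -> tests pass?
    finding_id: str,
) -> FixDecision:
    """Accept an AI-generated patch only if it passes every check."""
    before = scanner(original)
    after = scanner(patched)
    if finding_id not in before:
        return FixDecision(False, "finding not reproducible on original code")
    if finding_id in after:
        return FixDecision(False, "vulnerability still present after patch")
    new_findings = after - before
    if new_findings:
        return FixDecision(
            False, f"patch introduces new findings: {sorted(new_findings)}"
        )
    if not run_tests(patched):
        return FixDecision(False, "regression tests fail on patched code")
    return FixDecision(True, "passes all checks; queue for human review")
```

Note that even an accepted fix is only queued for human review in this sketch, which keeps a person accountable for the final decision.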

A second challenge is the possibility of adversarial attacks against the AI itself. As agentic AI becomes more widespread in cybersecurity, attackers may attempt to manipulate data or exploit weaknesses in the AI models. This underscores the importance of secure AI development practices, including techniques such as adversarial training and model hardening.

The accuracy and quality of the code property graph is also a significant factor in the success of agentic AI in AppSec. Building and maintaining an accurate CPG requires a substantial investment in static analysis tools, dynamic testing frameworks, and data integration pipelines. Organizations must also ensure that their CPGs are continuously updated to keep pace with changes in the codebase and evolving threats.

The Future of Agentic AI in Cybersecurity

Despite these challenges, the future of agentic AI in cybersecurity looks very promising. As AI technology continues to progress, we can expect increasingly capable autonomous agents that detect cyberattacks, respond to them, and limit their impact with unprecedented speed and precision. In AppSec, agentic AI has the potential to transform how we build and secure software, enabling enterprises to develop more robust, resilient, and secure applications.

Bringing agentic AI into the broader cybersecurity ecosystem also opens up opportunities for collaboration and coordination among security tools and processes. Imagine a world where autonomous agents work across network monitoring, incident response, threat analysis, and vulnerability management. They could share information, coordinate their actions, and provide proactive cyber defense.

As we move forward, organizations should embrace the potential of agentic AI while remaining mindful of the ethical and societal implications of autonomous technology. By fostering a culture of responsible AI development, we can harness the power of agentic AI to build a more secure and resilient digital future.

Conclusion

Agentic AI represents a significant advance in cybersecurity. It offers a new model for how we detect, prevent, and mitigate cyberattacks. The capabilities of autonomous agents, particularly in application security and automatic vulnerability fixing, can help organizations transform their security posture: from reactive to proactive, from manual to automated, and from generic to context-aware.

Agentic AI faces many challenges, but the rewards are too great to ignore. As we continue to push the limits of AI in cybersecurity, it is important to maintain a mindset of continuous learning, adaptation, and responsible innovation. Only then can we unlock the power of agentic AI to protect digital assets and organizations.
