Enhancing Strategic Decision-Making in Gomoku Using Large Language Models and Reinforcement Learning

Large language models (LLMs) have significantly advanced natural language processing (NLP), demonstrating strong text generation, comprehension, and reasoning capabilities. These models have been applied successfully across domains including education, intelligent decision-making, and gaming. In education, LLMs serve as interactive tutors, supporting personalized learning and improving students’ reading and writing skills. In decision-making, they analyze large datasets to generate insights for complex problems. In gaming, they enhance player experiences by generating dynamic content and supporting strategy development. Despite these successes, applying LLMs to intricate tasks such as strategic gameplay in Gomoku remains challenging. Gomoku, a classic board game known for its simple rules yet deep strategic complexity, is difficult both for traditional search-based methods, which are computationally expensive, and for machine learning approaches, which often struggle with efficiency. This has led researchers to explore how LLMs can be integrated with deep learning and reinforcement learning to build an AI capable of making rational strategic decisions in Gomoku.

Research on LLM applications in gaming has taken multiple directions, including evaluating model competency in simple deterministic games like Tic-Tac-Toe and assessing their strategic reasoning in more complex environments. Studies suggest that LLMs perform better in probabilistic games than in deterministic, complete-information settings, which presents challenges for games like Gomoku that demand deep spatial reasoning. Theoretical insights from game theory have examined LLMs’ ability to engage in strategic decision-making, while empirical studies emphasize the importance of prompt engineering in shaping their gameplay strategies. Despite advancements in multi-game evaluations, a notable gap persists between LLMs and human-level strategic reasoning. Addressing this limitation requires refining reinforcement learning frameworks to improve decision-making efficiency, ultimately bridging the gap between LLM-based agents and expert human players in strategic board games like Gomoku.

Researchers from Peking University have developed a Gomoku AI system based on LLMs that mimics human learning to enhance strategic decision-making. The system enables the model to interpret the board state, understand the game rules, select strategies, and evaluate positions. By incorporating self-play and reinforcement learning, the AI refines its move selection, avoids illegal moves, and improves efficiency through parallel position evaluation. Extensive training has significantly enhanced its gameplay, allowing it to adapt strategies dynamically. This approach demonstrates that LLMs can effectively learn and apply complex game strategies, making them valuable tools for strategic gameplay development.

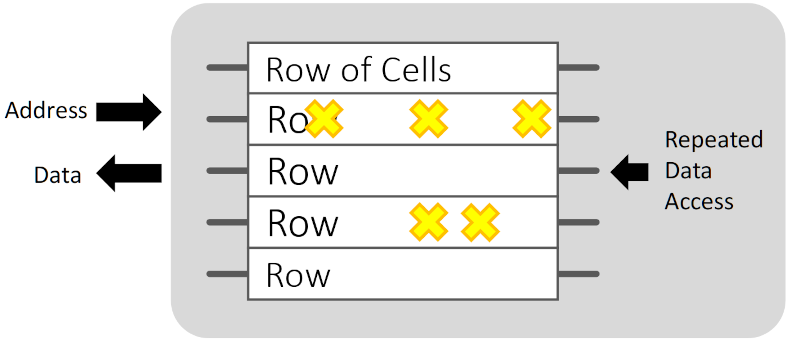

The Gomoku AI system is built from five key components: prompt design, strategy selection, position evaluation, self-play, and reinforcement learning. A specialized prompt template lets the LLM simulate human decision-making by incorporating the board state, the game rules, and strategic logic. The model selects from 52 strategies and nine analytical methods to refine its gameplay. To prevent illegal moves, a local position evaluation method scores only legal positions and selects the best one. Self-play improves strategic adaptability, while reinforcement learning with a Deep Q-Network (DQN) introduces per-turn rewards to speed up learning. This integrated approach significantly improves the Gomoku AI’s decision-making and performance.
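As a rough illustration of the local position evaluation idea, the Python sketch below enumerates only empty cells near existing stones and picks the highest-scoring one, so an occupied or off-board cell can never be returned. The 15x15 board size, the `radius` parameter, and the `line_length_score` heuristic are assumptions made for this example. The article does not say how each position is scored, so the heuristic is only a placeholder, and none of these names come from the paper.

```python
from typing import Callable, List, Tuple

BOARD_SIZE = 15  # assumed board size for this sketch
EMPTY, BLACK, WHITE = 0, 1, 2

Board = List[List[int]]
Move = Tuple[int, int]


def legal_moves(board: Board, radius: int = 2) -> List[Move]:
    """Return empty cells within `radius` of any stone (centre cell if the board is empty)."""
    stones = [(r, c) for r in range(BOARD_SIZE) for c in range(BOARD_SIZE)
              if board[r][c] != EMPTY]
    if not stones:
        return [(BOARD_SIZE // 2, BOARD_SIZE // 2)]
    moves = set()
    for r, c in stones:
        for dr in range(-radius, radius + 1):
            for dc in range(-radius, radius + 1):
                nr, nc = r + dr, c + dc
                on_board = 0 <= nr < BOARD_SIZE and 0 <= nc < BOARD_SIZE
                if on_board and board[nr][nc] == EMPTY:
                    moves.add((nr, nc))
    return sorted(moves)


def line_length_score(board: Board, move: Move, player: int) -> float:
    """Placeholder scorer (an assumption): longest run of `player` stones through `move`."""
    r, c = move
    best = 1
    for dr, dc in ((0, 1), (1, 0), (1, 1), (1, -1)):
        run = 1  # count the candidate stone itself
        for sign in (1, -1):
            nr, nc = r + sign * dr, c + sign * dc
            while (0 <= nr < BOARD_SIZE and 0 <= nc < BOARD_SIZE
                   and board[nr][nc] == player):
                run += 1
                nr, nc = nr + sign * dr, nc + sign * dc
        best = max(best, run)
    return float(best)


def select_move(board: Board, player: int,
                scorer: Callable[[Board, Move, int], float] = line_length_score) -> Move:
    """Score every legal candidate and return the best one."""
    candidates = legal_moves(board)
    if not candidates:
        raise ValueError("no legal moves left on the board")
    return max(candidates, key=lambda m: scorer(board, m, player))


if __name__ == "__main__":
    board = [[EMPTY] * BOARD_SIZE for _ in range(BOARD_SIZE)]
    for col in (3, 4, 5, 6):
        board[7][col] = BLACK
    print(select_move(board, BLACK))  # a cell that extends the row of four
```

Because the candidate list is built from empty cells only, the scorer can be swapped for any other evaluator (including a model call) without reintroducing the risk of illegal moves.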

A parallel framework built on Ray accelerates local position evaluation, cutting the time per move from 150 to 28 seconds. A state-action-reward database preserves self-play data, so progress is not lost when API calls fail. A visualization module renders moves and strategies graphically for clarity. The model, trained through 1,046 self-play games with a Deep Q-Network, significantly outperforms zero-shot, few-shot, and chain-of-thought methods. Performance evaluation includes human assessment and survival-step testing against AlphaZero, showing improved strategic accuracy and gameplay durability. Training over 1,000 episodes yields notable performance gains, demonstrating the method’s effectiveness.
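The two engineering ideas in this paragraph, evaluating candidate positions concurrently and persisting each state-action-reward record as it is produced, can be sketched with the Python standard library. This is not the authors' code: a `ThreadPoolExecutor` stands in for Ray, SQLite stands in for whatever database the system uses, and `slow_score`, `evaluate_parallel`, `open_log`, `log_transition`, and the `transitions` table are all hypothetical names chosen for this example.

```python
import json
import sqlite3
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Sequence, Tuple

Move = Tuple[int, int]


def slow_score(move: Move) -> float:
    """Stand-in for one slow evaluation call (for example, a remote model request)."""
    time.sleep(0.05)  # simulated latency
    r, c = move
    return -float(abs(r - 7) + abs(c - 7))  # toy score: closer to the centre is better


def evaluate_parallel(moves: Sequence[Move],
                      scorer: Callable[[Move], float] = slow_score,
                      max_workers: int = 8) -> Dict[Move, float]:
    """Score all candidate moves concurrently instead of one after another."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        scores = list(pool.map(scorer, moves))  # results keep the input order
    return dict(zip(moves, scores))


def open_log(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the transition log; pass a file path to persist across runs."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS transitions ("
        "id INTEGER PRIMARY KEY, state TEXT NOT NULL, "
        "action TEXT NOT NULL, reward REAL NOT NULL)"
    )
    return conn


def log_transition(conn: sqlite3.Connection, state: List[List[int]],
                   action: Move, reward: float) -> None:
    """Commit one (state, action, reward) record immediately so a later crash cannot lose it."""
    conn.execute(
        "INSERT INTO transitions (state, action, reward) VALUES (?, ?, ?)",
        (json.dumps(state), json.dumps(action), reward),
    )
    conn.commit()


if __name__ == "__main__":
    candidates = [(7, 7), (7, 8), (0, 0), (6, 6)]
    scores = evaluate_parallel(candidates)
    best = max(scores, key=scores.get)
    conn = open_log()
    log_transition(conn, [[0] * 15 for _ in range(15)], best, scores[best])
    print(best, conn.execute("SELECT COUNT(*) FROM transitions").fetchone()[0])
```

Threads suit this sketch because the simulated evaluation spends its time waiting rather than computing. Committing after every record trades some write throughput for durability, which matches the stated goal of not losing self-play progress.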

In conclusion, despite its success, the model still faces challenges: self-play learning is slow, and strategy depth is limited because only one strategy and one analytical logic are selected per move. Proposed improvements include combining multiple strategies for deeper analysis, adopting more advanced reinforcement learning methods such as Deep Deterministic Policy Gradient, and incorporating multi-agent systems. Drawing on AlphaZero’s results may further refine decision-making. The study demonstrates how LLMs can play Gomoku effectively through strategic reasoning and reinforcement learning, improving decision speed and accuracy. Future research will focus on optimizing strategy selection and integrating vision-language models for enhanced performance.

Check out the Paper. All credit for this research goes to the researchers of this project.
